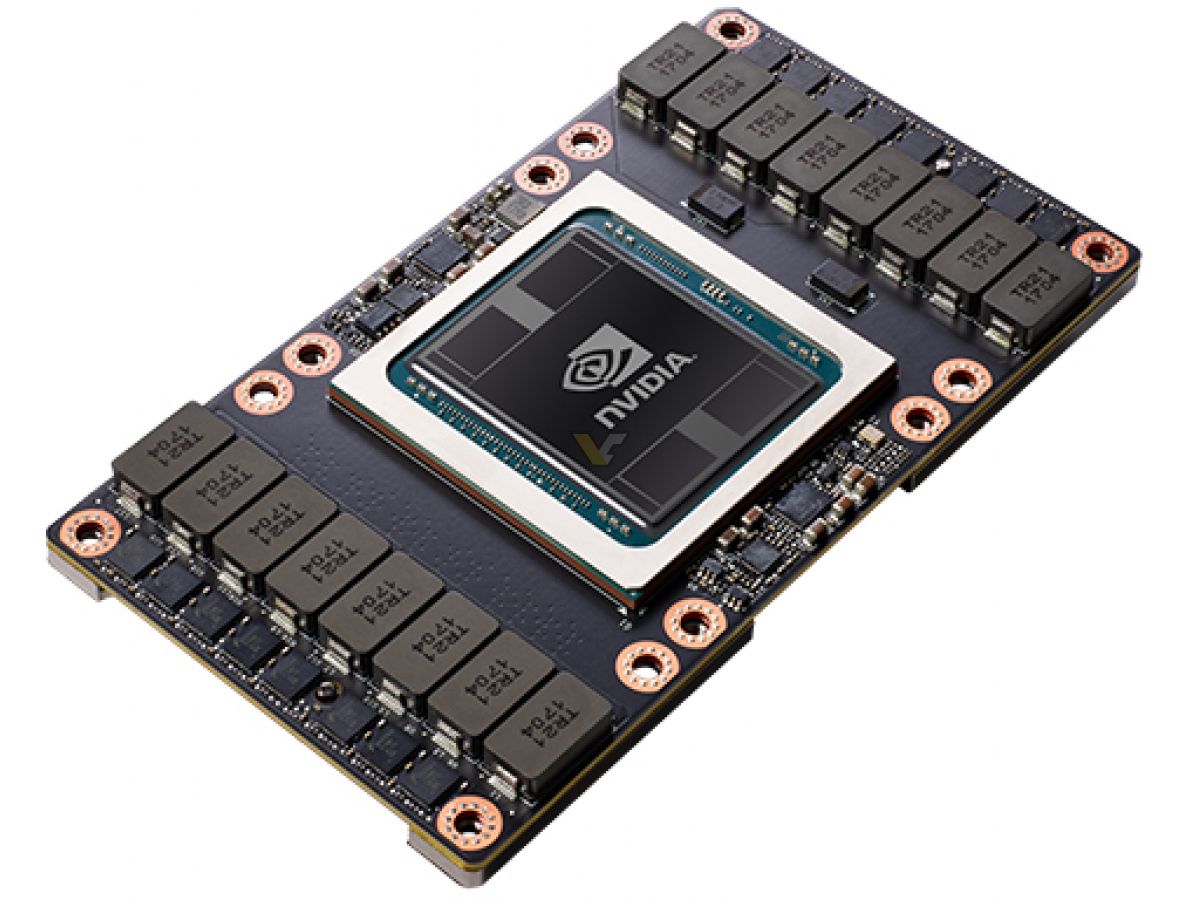

Transformer models are the backbone of language models used widely today, from BERT to GPT-3, and NVIDIA's headline claim for H100 is that its Transformer Engine supercharges AI with up to 30x higher performance.

Implemented using TSMC's 4N process customized for NVIDIA, with 80 billion transistors and numerous architectural advances, H100 is billed by NVIDIA as the world's most advanced chip ever built. Its new fourth-generation Tensor Cores are up to 6x faster chip-to-chip compared to A100, a figure that combines the per-SM speedup, the additional SM count, and the higher clocks of H100. On a per-SM basis, the Tensor Cores deliver 2x the MMA (Matrix Multiply-Accumulate) computational rate of the A100 SM on equivalent data types, and 4x the rate of A100 when the new FP8 data type is used in place of the previous generation's 16-bit floating-point options.

AI networks are big, with millions to billions of parameters. Not all of these parameters are needed for accurate predictions, and some can be converted to zeros to make the models “sparse” without compromising accuracy. The Sparsity feature exploits fine-grained structured sparsity in deep learning networks, doubling the performance of standard Tensor Core operations, so Tensor Cores in H100 can provide up to 2x higher performance for sparse models. While the sparsity feature more readily benefits AI inference, it can also improve the performance of model training.
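To make the idea of fine-grained structured sparsity more concrete, here is a minimal sketch in plain Python. It assumes the 2:4 pattern (at most two nonzero values in every group of four weights) that NVIDIA documents for its sparse Tensor Cores; the text above does not name the pattern, so treat that as an assumption. The function names `prune_2_of_4`, `densify`, and `sparse_dot` are hypothetical and only illustrate the bookkeeping, not how the hardware or any NVIDIA library implements it.

```python
def prune_2_of_4(weights):
    """Keep the two largest-magnitude weights in each group of four.

    Returns the kept values (half the original length) and, per group,
    the positions (0-3) those values came from.
    """
    if len(weights) % 4 != 0:
        raise ValueError("weight count must be a multiple of 4")
    values, indices = [], []
    for start in range(0, len(weights), 4):
        group = weights[start:start + 4]
        # Rank positions by magnitude, keep the top two, restore position order.
        ranked = sorted(range(4), key=lambda i: abs(group[i]), reverse=True)
        keep = tuple(sorted(ranked[:2]))
        indices.append(keep)
        values.extend(group[i] for i in keep)
    return values, indices


def densify(values, indices):
    """Expand the compressed form back into a dense list with zeros."""
    dense = []
    for g, keep in enumerate(indices):
        group = [0.0] * 4
        for slot, pos in enumerate(keep):
            group[pos] = values[2 * g + slot]
        dense.extend(group)
    return dense


def sparse_dot(values, indices, activations):
    """Dot product that touches only the kept weights (half the multiplies)."""
    total = 0.0
    for g, keep in enumerate(indices):
        for slot, pos in enumerate(keep):
            total += values[2 * g + slot] * activations[4 * g + pos]
    return total


weights = [0.9, -0.1, 0.05, -0.7, 0.2, 0.3, -0.8, 0.01]
activations = [1.0, 2.0, 3.0, 4.0, 5.0, 6.0, 7.0, 8.0]
values, indices = prune_2_of_4(weights)
result = sparse_dot(values, indices, activations)
```

The sparse dot product performs four multiplies instead of eight, which is the intuition behind the "up to 2x" figure: half of the weights are known to be zero in a regular pattern, so the corresponding work can be skipped. In practice a pruned network is usually fine-tuned afterward to recover accuracy, a step this sketch does not cover.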

The NVIDIA H100 Tensor Core GPU, powered by the NVIDIA Hopper GPU architecture, delivers the next massive leap in accelerated computing performance for NVIDIA's data center platforms. H100 securely accelerates diverse workloads, from small enterprise workloads to exascale HPC to trillion-parameter AI models. It features major advances to accelerate AI, HPC, memory bandwidth, interconnect, and communication at data center scale.

Hopper builds upon prior generations, from new compute core capabilities such as the Transformer Engine to faster networking, to power the data center with an order-of-magnitude speedup over the prior generation. NVIDIA NVLink supports ultra-high bandwidth and extremely low latency between two H100 boards, and supports memory pooling and performance scaling (application support required). Second-generation MIG securely partitions the GPU into isolated, right-sized instances to maximize QoS (quality of service) for 7x more secured tenants. The inclusion of NVIDIA AI Enterprise (exclusive to the H100 PCIe), a software suite that optimizes the development and deployment of accelerated AI workflows, maximizes performance through these new H100 architectural innovations. These technology breakthroughs fuel the H100 Tensor Core GPU.

NVIDIA H100 PCIe: Unprecedented Performance, Scalability, and Security for Every Data Center

The NVIDIA® H100 Tensor Core GPU enables an order-of-magnitude leap for large-scale AI and HPC, and includes the NVIDIA AI Enterprise software suite to streamline AI development and deployment. H100 accelerates exascale workloads with a dedicated Transformer Engine for trillion-parameter language models. For small jobs, H100 can be partitioned down to right-sized Multi-Instance GPU (MIG) partitions. With Hopper Confidential Computing, this scalable compute power can secure sensitive applications on shared data center infrastructure. The inclusion of NVIDIA AI Enterprise with H100 PCIe purchases reduces development time and simplifies deployment of AI workloads, which NVIDIA positions as making H100 the most powerful end-to-end AI and HPC data center platform. In this way, the NVIDIA Hopper architecture delivers performance, scalability, and security to every data center.
